They can be expressed as a two by two table (table 1). These are called a type I error and a type II error.Ī type I error is said to have occurred when we reject the null hypothesis incorrectly (that is, it is true and there is no difference between the two groups) and report a difference between the two groups being studied.Ī type II error is said to occur when we accept the null hypothesis incorrectly (that is, it is false and there is a difference between the two groups which is the alternative hypothesis) and report that there is no difference between the two groups. In trying to determine whether the two groups are the same (accepting the null hypothesis) or they are different (accepting the alternative hypothesis) we can potentially make two kinds of error. The alternative hypothesis is that there is a difference between the treatments in terms of mortality. The null hypothesis is that there is no difference between the treatments in terms of mortality. There are two hypotheses then that we need to consider:

The basic hypothesis for the studies may have compared, for example, the day 21 mortality of thrombolysis compared with placebo. Generally these trials compared thrombolysis with placebo and often had a primary outcome measure of mortality at a certain number of days. It was not until the completion of adequately powered “mega-trials” that the small but important benefit of thrombolysis was proved. For many years clinicians felt that this treatment would be of benefit given the proposed aetiology of AMI, however successive studies failed to prove the case. Power and sample size estimations are used by researchers to determine how many subjects are needed to answer the research question (or null hypothesis).Īn example is the case of thrombolysis in acute myocardial infarction (AMI). But how many do we need to study in order to get as close as we need to the right answer? Intuitively we assume that the greater the proportion of the whole population studied, the closer we will get to true answer for that population. However, another factor influences the possibility that our results may be incorrect, the number of patients studied. Clearly we can reduce the possibility of our results coming from chance by eliminating bias in the study design using techniques such as randomisation, blinding, etc.

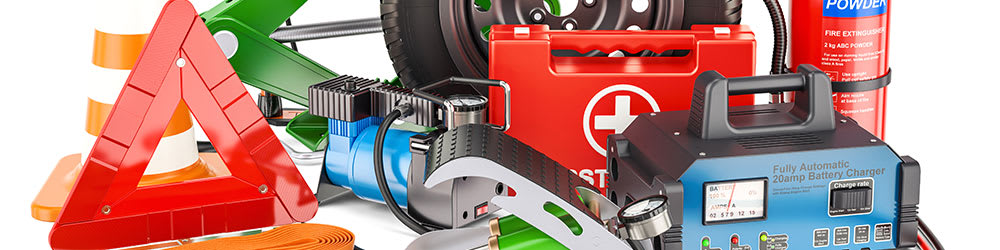

#WHATS IN EMERGENCY 20 SERIES#

In previous articles in the series on statistics published in this journal, statistical inference has been used to determine if the results found are true or possibly due to chance alone.

We then use this sample to draw inferences about the whole population. Nearly all clinical studies entail studying a sample of patients with a particular characteristic rather than the whole population. Power and sample size estimations are measures of how many patients are needed in a study.
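To make the link between the type I and type II errors and the number of patients concrete, the sketch below uses the common normal-approximation formula for comparing two proportions, such as mortality in two treatment arms. It is an illustration under stated assumptions, not the method of any particular trial: the significance level `alpha` is the accepted risk of a type I error, `power` is 1 - beta where beta is the accepted risk of a type II error, and the mortality rates of 12% and 10% are hypothetical values chosen for illustration, not data from the thrombolysis trials. The function names `sample_size_per_group` and `power_for_n` are likewise hypothetical.

```python
from math import ceil, sqrt
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal distribution (mean 0, sd 1)


def sample_size_per_group(p1: float, p2: float,
                          alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate patients needed in EACH arm to detect mortality p1 vs p2.

    Assumes a two-sided test of two independent proportions using the
    normal approximation (hypothetical helper for illustration only).
    """
    z_alpha = STD_NORMAL.inv_cdf(1 - alpha / 2)  # controls type I error (two-sided)
    z_beta = STD_NORMAL.inv_cdf(power)           # power = 1 - beta (type II error)
    p_bar = (p1 + p2) / 2                        # pooled rate under the null hypothesis
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / (p1 - p2) ** 2)      # round up to whole patients


def power_for_n(p1: float, p2: float, n: int, alpha: float = 0.05) -> float:
    """Approximate power of the same two-sided test with n patients per arm."""
    z_alpha = STD_NORMAL.inv_cdf(1 - alpha / 2)
    p_bar = (p1 + p2) / 2
    se_null = sqrt(2 * p_bar * (1 - p_bar) / n)           # standard error if no true difference
    se_alt = sqrt((p1 * (1 - p1) + p2 * (1 - p2)) / n)    # standard error under the alternative
    # Probability the observed difference clears the critical value; the tiny
    # chance of rejecting in the opposite direction is ignored.
    return STD_NORMAL.cdf((abs(p1 - p2) - z_alpha * se_null) / se_alt)


if __name__ == "__main__":
    n = sample_size_per_group(0.12, 0.10)
    print(f"Patients per arm for 80% power: {n}")
    print(f"Power with only 200 per arm: {power_for_n(0.12, 0.10, 200):.2f}")
```

Under these assumed rates, a two percentage point reduction in mortality needs a few thousand patients per arm for 80% power, while a trial of 200 per arm has well under a 20% chance of detecting it. This mirrors the situation described for thrombolysis, where small studies repeatedly risked a type II error until large trials were run.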